Calculating the Usage of a Specific CPU on Linux: A Worked Example

Source: Internet, 2026-04-02 12:43:31


Requirement

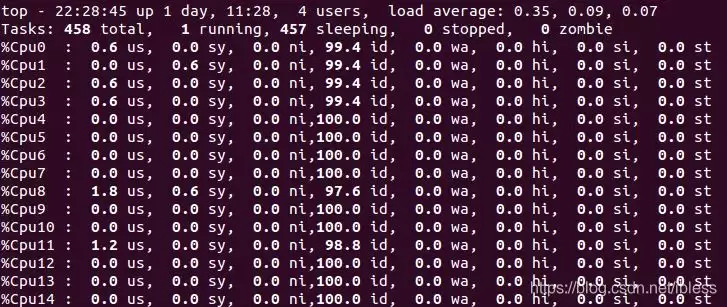

熟悉Linux的朋友都知道,用top命令可以轻松查看进程的CPU占用情况。要是想深入了解每个CPU核心的负载,操作也很简单:先运行top,然后按下数字"1"键,系统就会展示每个CPU的详细使用情况,就像这样:

不过,实际工作中我们常常会遇到更具体的需求:能不能精确计算出某个CPU核心的占用率呢?这个问题看似简单,背后却藏着不少门道。

Solution

1. Background

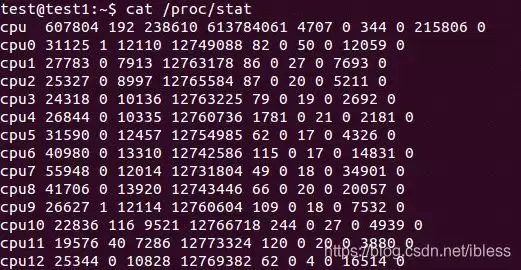

要解决这个问题,得先了解Linux系统的一个关键文件——/proc/stat。这个文件记录了每个CPU核心的详细使用数据,打开后你会看到这样的信息:

文件里那些以cpu(0/1/2/...)开头的行,后面跟着的十个数字各自代表什么含义呢?官方文档给出了明确的解释:

/proc/stat

kernel/system statistics. Varies with architecture. Common entries include:

user nice system idle iowait irq softirq steal guest guest_nice

cpu 4705 356 584 3699 23 23 0 0 0 0

cpu0 1393280 32966 572056 13343292 6130 0 17875 0 23933 0

The amount of time, measured in units of USER_HZ (1/100ths of a second on most architectures, use sysconf(_SC_CLK_TCK) to obtain the right value), that the system ("cpu" line) or the specific CPU ("cpuN" line) spent in various states:

user (1) Time spent in user mode.

nice (2) Time spent in user mode with low priority (nice).

system (3) Time spent in system mode.

idle (4) Time spent in the idle task.

iowait (since Linux 2.5.41) (5) Time waiting for I/O to complete.

irq (since Linux 2.6.0-test4) (6) Time servicing interrupts.

softirq (since Linux 2.6.0-test4) (7) Time servicing softirqs.

steal (since Linux 2.6.11) (8) Stolen time, which is the time spent in other operating systems when running in a virtualized environment.

guest (since Linux 2.6.24) (9) Time spent running a virtual CPU for guest operating systems under the control of the Linux kernel.

guest_nice (since Linux 2.6.33) (10) Time spent running a niced guest (virtual CPU for guest operating systems under the control of the Linux kernel).
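To make the field order concrete, the sketch below labels the counters of one CPU line by position. The function name show_cpu_fields and its optional file argument are illustrative assumptions, not part of the man page; counters missing on older kernels are printed as 0.

```bash
#!/bin/bash
# Hypothetical helper: print the ten counters of one /proc/stat CPU line with their names.
# Usage: show_cpu_fields cpu0 [stat-file]   (stat-file defaults to /proc/stat)
show_cpu_fields() {
  awk -v label="$1" '
    $1 == label {
      # Names in the order documented by proc(5); column 1 is the label itself.
      n = split("user nice system idle iowait irq softirq steal guest guest_nice", name, " ")
      for (i = 1; i <= n; i++)
        printf "%s=%s\n", name[i], ((i + 1) <= NF ? $(i + 1) : 0)
      found = 1
      exit
    }
    END { if (!found) exit 1 }   # non-zero status when the label is absent
  ' "${2:-/proc/stat}"
}
```

Calling show_cpu_fields cpu0 prints one name=value pair per line. The values are in USER_HZ ticks; getconf CLK_TCK reports how many ticks make up one second on the running system.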

2. Calculating the usage of a specific CPU

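With that background, the calculation is straightforward:

Total CPU time = user + nice + system + idle + iowait + irq + softirq + steal

Total idle time = idle + iowait

Total busy time = total CPU time - total idle time

CPU usage = total busy time / total CPU time * 100%

guest and guest_nice are left out of the total because the kernel already counts that time in user and nice.

The same formula works for a single core (a cpuN line) and for the whole system (the cpu line).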
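As a rough illustration, the formula can be applied to a single snapshot of /proc/stat. Because the counters are cumulative, this gives the average usage since boot rather than the current load. The helper names cpu_total_idle and cpu_usage_since_boot are assumptions made for this sketch.

```bash
#!/bin/bash
# Hypothetical helper: print "<total> <idle>" for one CPU label, following the formula above.
# Usage: cpu_total_idle cpu0 [stat-file]   (stat-file defaults to /proc/stat)
cpu_total_idle() {
  awk -v label="$1" '
    $1 == label {
      # Columns 2..9 are user..steal; guest and guest_nice (columns 10, 11) are left out.
      total = 0
      for (i = 2; i <= 9 && i <= NF; i++) total += $i
      idle = $5 + $6             # idle + iowait
      printf "%.0f %.0f\n", total, idle
      found = 1
      exit
    }
    END { if (!found) exit 1 }
  ' "${2:-/proc/stat}"
}

# Average usage since boot, in percent with one decimal, for one CPU label.
cpu_usage_since_boot() {
  cpu_total_idle "$1" "${2:-/proc/stat}" |
    awk '$1 > 0 { printf "%.1f\n", ($1 - $2) * 100 / $1 }'
}
```

For the sample cpu line quoted from the man page (4705 356 584 3699 23 23 0 0 0 0), the total is 9390 ticks and the idle time is 3722 ticks, so the function reports 60.4.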

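Because the counters are cumulative since boot, measuring usage over a time interval takes two samples. Read /proc/stat at time t1 and compute the total CPU time (total1) and the total idle time (idle1); read it again at time t2 and compute total2 and idle2; then apply: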

Interval CPU usage = ((total2 - total1) - (idle2 - idle1)) / (total2 - total1) * 100%

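In other words, the numerator is the time the CPU spent busy during the interval, and the denominator is all the CPU time that elapsed in it. The script below applies the same two-sample approach to the aggregate cpu line. Note that it counts only the idle field as idle time and sums every field on the line, so its result can differ slightly from the formula above: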

    #!/bin/bash
    # by Paul Colby (http://colby.id.au), no rights reserved ;)

    PREV_TOTAL=0
    PREV_IDLE=0

    while true; do
      # Read the aggregate CPU counters, dropping the leading 'cpu' label.
      CPU=($(sed -n 's/^cpu\s//p' /proc/stat))
      IDLE=${CPU[3]} # Only the idle field is treated as idle time.

      # Sum every field on the line to get the total CPU time.
      TOTAL=0
      for VALUE in "${CPU[@]}"; do
        let "TOTAL=$TOTAL+$VALUE"
      done

      # Compute the CPU usage since the previous check, rounded to the nearest percent.
      let "DIFF_IDLE=$IDLE-$PREV_IDLE"
      let "DIFF_TOTAL=$TOTAL-$PREV_TOTAL"
      let "DIFF_USAGE=(1000*($DIFF_TOTAL-$DIFF_IDLE)/$DIFF_TOTAL+5)/10"
      echo -en "\rCPU: $DIFF_USAGE% \b\b"

      # Remember the current totals for the next check.
      PREV_TOTAL="$TOTAL"
      PREV_IDLE="$IDLE"

      # Wait before checking again.
      sleep 1
    done
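The script above watches the aggregate cpu line. For a single core, a minimal sketch along the same lines might look like the following. It follows the formula given earlier (idle + iowait as idle time, user through steal as total) rather than the script's accounting. The function names read_core, usage_between and core_usage are assumptions made for this example.

```bash
#!/bin/bash
# Sketch: usage of one core between two samples of /proc/stat.

# Print "<total> <idle>" for the line labelled $1, read from stdin.
# total = user..steal (columns 2..9), idle = idle + iowait (columns 5, 6).
read_core() {
  awk -v label="$1" '$1 == label {
    total = 0
    for (i = 2; i <= 9 && i <= NF; i++) total += $i
    printf "%.0f %.0f\n", total, $5 + $6
    exit
  }'
}

# Busy percentage between two samples. Args: total1 idle1 total2 idle2.
usage_between() {
  local dt=$(( $3 - $1 )) di=$(( $4 - $2 ))
  if [ "$dt" -le 0 ]; then echo 0; return; fi
  # Integer arithmetic, rounded to the nearest percent.
  echo $(( (100 * (dt - di) + dt / 2) / dt ))
}

# Sample one core twice, $2 seconds apart (default 1), and print its usage.
core_usage() {
  local core=${1:-cpu0} interval=${2:-1} s1 s2
  s1=$(read_core "$core" < /proc/stat)
  [ -n "$s1" ] || { echo "no such CPU: $core" >&2; return 1; }
  sleep "$interval"
  s2=$(read_core "$core" < /proc/stat)
  [ -n "$s2" ] || return 1
  # Word splitting is intentional: each sample is "<total> <idle>".
  echo "$core: $(usage_between $s1 $s2)%"
}
```

Running core_usage cpu2 5 would report how busy cpu2 was over a five-second window. Keeping the arithmetic in usage_between separate from the sampling makes it easy to check with fixed numbers.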

Going further

If you want to dig deeper, it is worth looking at how the kernel produces /proc/stat. In the Linux kernel source, the implementation is in:

Note: kernel version 3.14.69, file fs/proc/stat.c

    /* 13 #include directives appear here; the header names are missing from this copy */

    #ifndef arch_irq_stat_cpu
    #define arch_irq_stat_cpu(cpu) 0
    #endif
    #ifndef arch_irq_stat
    #define arch_irq_stat() 0
    #endif

    #ifdef arch_idle_time

    static cputime64_t get_idle_time(int cpu)
    {
        cputime64_t idle;

        idle = kcpustat_cpu(cpu).cpustat[CPUTIME_IDLE];
        if (cpu_online(cpu) && !nr_iowait_cpu(cpu))
            idle += arch_idle_time(cpu);
        return idle;
    }

    static cputime64_t get_iowait_time(int cpu)
    {
        cputime64_t iowait;

        iowait = kcpustat_cpu(cpu).cpustat[CPUTIME_IOWAIT];
        if (cpu_online(cpu) && nr_iowait_cpu(cpu))
            iowait += arch_idle_time(cpu);
        return iowait;
    }

    #else

    static u64 get_idle_time(int cpu)
    {
        u64 idle, idle_time = -1ULL;

        if (cpu_online(cpu))
            idle_time = get_cpu_idle_time_us(cpu, NULL);

        if (idle_time == -1ULL)
            /* !NO_HZ or cpu offline so we can rely on cpustat.idle */
            idle = kcpustat_cpu(cpu).cpustat[CPUTIME_IDLE];
        else
            idle = usecs_to_cputime64(idle_time);

        return idle;
    }

    static u64 get_iowait_time(int cpu)
    {
        u64 iowait, iowait_time = -1ULL;

        if (cpu_online(cpu))
            iowait_time = get_cpu_iowait_time_us(cpu, NULL);

        if (iowait_time == -1ULL)
            /* !NO_HZ or cpu offline so we can rely on cpustat.iowait */
            iowait = kcpustat_cpu(cpu).cpustat[CPUTIME_IOWAIT];
        else
            iowait = usecs_to_cputime64(iowait_time);

        return iowait;
    }

    #endif

    static int show_stat(struct seq_file *p, void *v)
    {
        int i, j;
        unsigned long jif;
        u64 user, nice, system, idle, iowait, irq, softirq, steal;
        u64 guest, guest_nice;
        u64 sum = 0;
        u64 sum_softirq = 0;
        unsigned int per_softirq_sums[NR_SOFTIRQS] = {0};
        struct timespec boottime;

        user = nice = system = idle = iowait =
            irq = softirq = steal = 0;
        guest = guest_nice = 0;
        getboottime(&boottime);
        jif = boottime.tv_sec;

        for_each_possible_cpu(i) {
            user += kcpustat_cpu(i).cpustat[CPUTIME_USER];
            nice += kcpustat_cpu(i).cpustat[CPUTIME_NICE];
            system += kcpustat_cpu(i).cpustat[CPUTIME_SYSTEM];
            idle += get_idle_time(i);
            iowait += get_iowait_time(i);
            irq += kcpustat_cpu(i).cpustat[CPUTIME_IRQ];
            softirq += kcpustat_cpu(i).cpustat[CPUTIME_SOFTIRQ];
            steal += kcpustat_cpu(i).cpustat[CPUTIME_STEAL];
            guest += kcpustat_cpu(i).cpustat[CPUTIME_GUEST];
            guest_nice += kcpustat_cpu(i).cpustat[CPUTIME_GUEST_NICE];
            sum += kstat_cpu_irqs_sum(i);
            sum += arch_irq_stat_cpu(i);

            for (j = 0; j < NR_SOFTIRQS; j++) {
                unsigned int softirq_stat = kstat_softirqs_cpu(j, i);

                per_softirq_sums[j] += softirq_stat;
                sum_softirq += softirq_stat;
            }
        }
        sum += arch_irq_stat();

        seq_puts(p, "cpu ");
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(user));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(nice));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(system));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(idle));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(iowait));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(irq));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(softirq));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(steal));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(guest));
        seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(guest_nice));
        seq_putc(p, '\n');

        for_each_online_cpu(i) {
            user = kcpustat_cpu(i).cpustat[CPUTIME_USER];
            nice = kcpustat_cpu(i).cpustat[CPUTIME_NICE];
            system = kcpustat_cpu(i).cpustat[CPUTIME_SYSTEM];
            idle = get_idle_time(i);
            iowait = get_iowait_time(i);
            irq = kcpustat_cpu(i).cpustat[CPUTIME_IRQ];
            softirq = kcpustat_cpu(i).cpustat[CPUTIME_SOFTIRQ];
            steal = kcpustat_cpu(i).cpustat[CPUTIME_STEAL];
            guest = kcpustat_cpu(i).cpustat[CPUTIME_GUEST];
            guest_nice = kcpustat_cpu(i).cpustat[CPUTIME_GUEST_NICE];
            seq_printf(p, "cpu%d", i);
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(user));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(nice));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(system));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(idle));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(iowait));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(irq));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(softirq));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(steal));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(guest));
            seq_put_decimal_ull(p, ' ', cputime64_to_clock_t(guest_nice));
            seq_putc(p, '\n');
        }
        seq_printf(p, "intr %llu", (unsigned long long)sum);

        for_each_irq_nr(j)
            seq_put_decimal_ull(p, ' ', kstat_irqs_usr(j));

        seq_printf(p,
            "\nctxt %llu\n"
            "btime %lu\n"
            "processes %lu\n"
            "procs_running %lu\n"
            "procs_blocked %lu\n",
            nr_context_switches(),
            (unsigned long)jif,
            total_forks,
            nr_running(),
            nr_iowait());

        seq_printf(p, "softirq %llu", (unsigned long long)sum_softirq);

        for (i = 0; i < NR_SOFTIRQS; i++)
            seq_put_decimal_ull(p, ' ', per_softirq_sums[i]);
        seq_putc(p, '\n');

        return 0;
    }

    static int stat_open(struct inode *inode, struct file *file)
    {
        size_t size = 1024 + 128 * num_possible_cpus();
        char *buf;
        struct seq_file *m;
        int res;

        size += 2 * nr_irqs;
        if (size > KMALLOC_MAX_SIZE)
            size = KMALLOC_MAX_SIZE;
        buf = kmalloc(size, GFP_KERNEL);
        if (!buf)
            return -ENOMEM;

        res = single_open(file, show_stat, NULL);
        if (!res) {
            m = file->private_data;
            m->buf = buf;
            m->size = ksize(buf);
        } else
            kfree(buf);
        return res;
    }

    static const struct file_operations proc_stat_operations = {
        .open = stat_open,
        .read = seq_read,
        .llseek = seq_lseek,
        .release = single_release,
    };

    static int __init proc_stat_init(void)
    {
        proc_create("stat", 0, NULL, &proc_stat_operations);
        return 0;
    }
    fs_initcall(proc_stat_init);
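In the listing, show_stat builds the aggregate cpu line by summing each counter over all possible CPUs, then prints one cpuN line per online CPU. One way to observe that relationship from user space is to add up a column across the cpuN lines and compare it with the aggregate. The helper name compare_column is an assumption made for this sketch. On a live system the two numbers may differ slightly, since each value is converted to clock ticks separately and offline CPUs contribute only to the aggregate.

```bash
#!/bin/bash
# Hypothetical helper: compare one counter of the aggregate "cpu" line with
# the sum of the same counter over all "cpuN" lines.
# $1 is the 1-based counter index (1 = user ... 10 = guest_nice);
# $2 is the stat file (defaults to /proc/stat).
compare_column() {
  awk -v col="$1" '
    $1 == "cpu"        { aggregate = $(col + 1) }      # aggregate line
    $1 ~ /^cpu[0-9]+$/ { per_cpu_sum += $(col + 1) }   # per-CPU lines
    END { printf "aggregate=%.0f per_cpu_sum=%.0f\n", aggregate, per_cpu_sum }
  ' "${2:-/proc/stat}"
}
```

For example, compare_column 4 compares the idle counter of the cpu line with the idle counters of cpu0, cpu1, and so on.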

References

http://man7.org/linux/man-pages/man5/proc.5.html

https://github.com/pcolby/scripts/blob/master/cpu.sh

https://elixir.bootlin.com/linux/v3.14.69/source/fs/proc/stat.c

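With the usage formula and a look at how the kernel generates /proc/stat, you have what you need for per-core CPU monitoring and a starting point for deeper performance analysis.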
